Since our clients run the gamut of non-local, multi-location, hyperlocal and agencies we are constantly working deliverables that:

- Provide valuable data

- Are relatively quick to put together

One of the biggest requests we get is from clients that ask us to examine both their content and the content of their competitors. The most common reasons for this is they are looking for us to tell them where content ranks based on some metrics:

- What content drives traffic

- What content gets links

- What content gets shared

They also want to address any opportunity gaps e.g do they have content that is underperforming in terms of driving any of the above metrics? Are their competitors attacking their content strategy from a different way, and is it successful or not?

In an effort to streamline this process across our business we put together the following document and I’m going to walk you through how you too can quickly and easily provide valuable content audits for your company/clients.

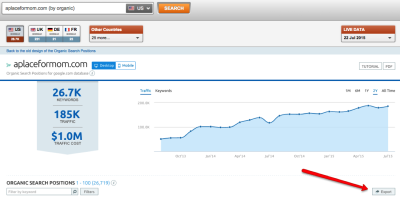

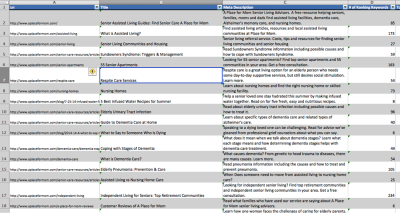

The first place to start is SEMrush. It gives you great directional data that can be used to drive the final report. When you enter a URL in SEMrush make sure you go to the organic positions report, that’s where the good stuff is:

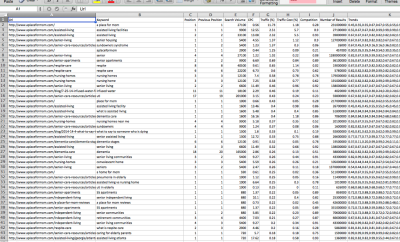

Next export all the data out of this report. Thankfully, this tool has a handy export button:

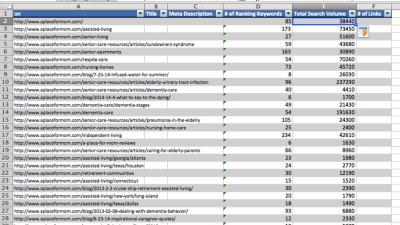

Now that we have our handy dandy data export it’s time to start munging some data in Excel. First thing I always do with SEMrush exports is move the URL column to before column A (just an insert, don’t replace column A data). Generally, we are be going to use the URL for most of our lookups so this makes it possible to lookup any value in the sheet based on URL. For those of you playing along at home, your sheet should look something like this:

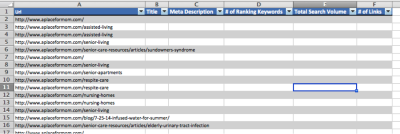

Now is where the real nerd fun begins. Copy column A (URL if you are following my instructions) and put it in a new sheet. Let’s also add our column headers for our final report (we use Title, Meta Description, # of Ranking Keywords, Total Search Volume & Number of Links). After you add those do a quick format as table. So your new sheet should look something like this:

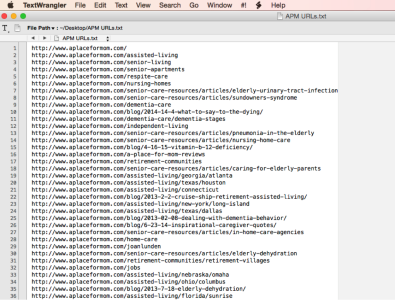

Now highlight the URL column and do a remove duplicates and you should be left with just one of every url that SEMrush has as ranking for a term. The next step is to begin getting the <title> tags and meta description. I prefer using Screaming Frog. Just highlight all the URLs and move them over to a text document and save it. It should look something like this:

Next open up Screaming Frog and change it to list mode by going to Mode -> List. Then click upload list and upload the text file you just made and click start. Now, while the bot gets our meta data, lets go back to our Excel file.

First, we are gonna fill out the “# of Ranking Keywords” column, and we can do that with a simple countif. Basically, we just count the number of times a URL appears in the raw SEMrush data. Since each row of that sheet is the ranking of specific keyword, then every time a URL appears is another keyword that URL is ranking for. For those of you that have been playing along at home, my formula for this is:

=COUNTIF(‘SEMrush Data’!A2:A26613,[@Url])

Next it’s aggregating the search volume for all keywords a URL ranks for. While this may sound complicated, it’s actually just a pretty simple sumif formula. Basically, we are going to use sumif to add up the search volume for every row that the same URL is in (this is a reason that having the URL in the first column is important). Again, for those of you playing along at home, this is what my formula is:

=SUMIF(‘SEMrush Data’!$A$2:$E$26613,[@Url],’SEMrush Data’!$E$2:$E$26613).

Now that we have aggregated all the SEMrush data our sheets should look something like this:

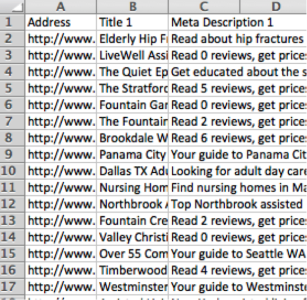

Now it’s time to use that Screaming Frog crawl to get that meta data into this sheet. First things first, export the crawl data out of Screaming Frog. Then we are going to open crawl file and drag it over to be a part of our work book. After that, I like cleaning it up so that the only information in it is what we are going to be using (URL, Title, Meta Description). When done the new sheet should look something like this:

Now we perform a couple of vlookups off the URL in the main sheet to get the meta information over. When done your main sheet should looks something like this:

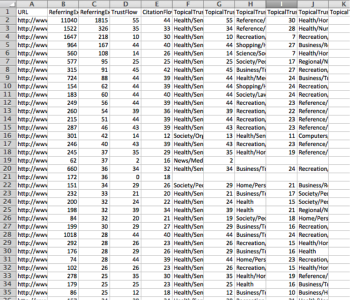

Now, let’s some link metrics! You can use your link tool of choice for this, but we prefer Majestic or AHREFs (they have the best link indexes). Basically all you do is export a list of the pages on the domain and how many link they have pointed at them (all link tools have this ability). Export this data out of your tool and you should have something that looks similar to this (which is from Majestic):

Now it’s just a simple vlookup to move the link data over into our main sheet. For those of you following along, here is my formula:

=VLOOKUP([@Url],’Majestic Link Data’!$A$2:$B$5001,2,FALSE)

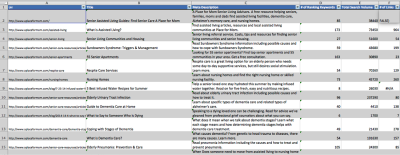

And now my main sheet looks like this:

This is how you can create an actionable content audit in ~15 minutes. It’s great for taking inventory of your clients, look at their competitors and doing research for link-building and content creation.

In a future follow-up we will go over how you might want to suppliment this audit if you have more then 15 minutes and it will include things like adding average rank for the URL across all it’s keywords, adding in actually monthly search traffic via Google Analytics and bringing in social sharing data.

No Comment