#1 Search Engine Ranking Factor: SEO QA

In April of 2007, Rand Fishkin of SEOMoz.org published the results of a survey of top SEOs regarding what they believed were the top search engine ranking factors. It was pretty interesting stuff, but something happened recently that reminded me that all of these top SEOs totally missed the boat: none of them mentioned the most important search engine ranking factor of them all, QA.

QA (aka “Quality Assurance”) is the process by which you check all of the work done on your website before you release it to the rest of the world, including the search engines. QA is hard enough to do on “normal” feature releases, but for some reason whenever there is SEO involved it gets even harder, usually because the team has not adopted SEO as a part of its everyday process. Screwing up QA on SEO features can mean losing a lot, if not all, of your traffic at the flick of a switch.

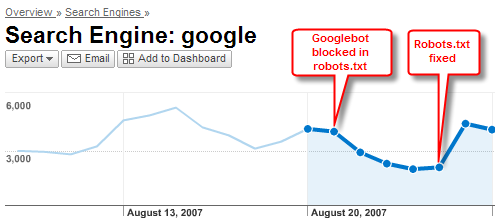

Here’s a perfect example: I noticed that one client’s Google traffic had dropped almost 50% compared with two days earlier, so I asked what they had done to the site. They told me “nothing”, but when I looked at the robots.txt file, I saw they had rewritten it in such a way that it blocked Google from crawling the entire site. I quickly alerted them and they corrected the problem, but had I not been looking, they probably would have lost a lot more traffic for a lot longer. The problem was that some of the developers were not following the SEO QA process I had given them. As the CEO later told me, this could have been “an enormous tragedy” for their business.

So say what you will about title tags and external links; I say SEO QA is the #1 search engine ranking factor.

Here’s my standard SEO QA checklist for your enjoyment. Feel free to add more SEO QA ideas in the comments section.

The Local SEO Guide SEO QA Checklist

The following items should be tested before every new release:

- For dynamic pages, does each page type have a unique Title, Meta Description and Meta Keywords tag formula?

- For static pages, does each page have a unique Title, Meta Description and Meta Keywords tag?

- Inspect your robots.txt file and make sure that only URLs that you don’t want the search engines to see are listed. Examples of pages you probably don’t want indexed include login, email-to-a-friend, printer-friendly pages, most footer pages, etc. If you see “Disallow: /” in a section that applies to all robots (“User-agent: *”), you are blocking all robots from crawling the entire site. This is not good.

- Run a report of all dynamic page types that have a “noindex” tag and confirm that only page types that you don’t want the search engines to see have this tag.

- Test all URL redirects. Make sure the following redirects are in place:

    - The non-www version of every URL 301s to the www version (or vice-versa)

    - URLs that end in / 301 to the version that has no / (or vice-versa)

    - All mixed-case URLs 301 to lowercase versions

    - Test-version subdomains (e.g. alpha.site.com) either 301 to the root domain or are password protected

- Make sure any URLs that are being eliminated 301 to the new version of the URL or, if there is no new version, to the root domain or a related directory on the site.

- If you are using an XML sitemap file to update Google, Yahoo & MSN, has the file been updated to reflect the new changes?

- Run a crawler such as Linkscan against your site to make sure that your pages are not delivering error codes and to see if there are any chain redirects (e.g. 301 to 301 to 301). Avoid chain redirects if possible.

- Create a list of items that have changed that could affect SEO to help quickly diagnose any issues that may result from the new release. Typical items include:

    - Addition or reduction of links on a page

    - Rewritten page copy and meta information

    - Addition of new pages

    - Eliminated URLs

    - Redirected URLs
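If you want to automate a couple of the checks above, here is a minimal Python sketch of two of them: the robots.txt “Disallow: /” check and the chain-redirect check. It is only an illustration, not the process I gave my client. The function names (`site_is_blocked`, `redirect_chain`) and the `crawl` table are made up for this example, and the chain check assumes you have already recorded each URL’s status code and Location header from a crawl.

```python
from urllib.robotparser import RobotFileParser

# Status codes treated as redirects in this sketch.
REDIRECT_CODES = (301, 302, 307, 308)


def site_is_blocked(robots_txt, user_agent="Googlebot"):
    """Return True if the robots.txt text stops user_agent from crawling '/'.

    Hypothetical helper: it parses the text you pass in, so you can run it
    against the file in your release branch before it ever goes live.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, "/")


def redirect_chain(url, crawl, max_hops=10):
    """Return the list of URLs visited when following redirects from url.

    crawl is an assumed lookup table of URL -> (status_code, location),
    e.g. built from your crawler's report. URLs missing from the table are
    treated as final destinations. max_hops guards against redirect loops.
    """
    chain = [url]
    while len(chain) <= max_hops:
        status, location = crawl.get(chain[-1], (200, None))
        if status not in REDIRECT_CODES or not location:
            break
        chain.append(location)
    return chain
```

A chain with more than two entries means at least one redirect points at another redirect, which is the “301 to 301 to 301” situation from the checklist. To check a live robots.txt instead of a local copy, `RobotFileParser` also has `set_url()` and `read()` methods that fetch the file for you.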